Presentation of user testing

User testing is a method of assessing a service by having users interact directly with its interface. This interface can be reduced to a prototype whose maturity varies according to the progress of the project, which in turn determines the choice of user testing method:

- Perception testing: This testing verifies that the participant can identify the functions assigned to the different areas, buttons and controls of the interface. It ensures that the “vocabulary” used is comprehensible to the participant. This type of testing can be based on a “paper prototype” (i.e. offline), although an on-screen presentation can be used to give a more accurate rendering of the colours of the interface.

- Usability testing: It consists of having participants carry out a set of more or less guided tasks. The tasks must be realistic, based on known or proven uses. Observing users in a real situation allows us to identify their difficulties, surprises, misunderstandings and opinions about the interaction.

- Benchmarking: If several versions of the application are evaluated, user testing can be used to check which version of the interface proves to be the most efficient.

Already used in ergonomics, user testing is THE method to evaluate the usability of an interface. Although it is fundamentally a qualitative method, it is nonetheless a tool that allows us to objectively evaluate a dimension of the user experience.

Preparation

User testing is a truly user-centric method. It yields useful results because those results are reliable. However, it requires some preparation.

Sampling

Participants must be potential end users, otherwise the test loses its value. Drawing on surveys, polls, field observations or market research, an attempt should be made to assemble a panel that is as representative as possible. The selection of the panel may be based on intent to use the type of product in question or on variables such as gender or age.

In practice, a pre-test questionnaire can be used, for example, to ascertain the characteristics of the participants (e.g. level of previous expertise in the use of the product). Studies have shown that attitudes towards technology or computing have an effect on mental workload and performance. It is therefore worth taking these factors into consideration.

It is regularly argued in the literature that five participants are sufficient to conduct a usability test. Nielsen has reported that five well-chosen users detect about 85% of usability problems, and that few new problems are discovered beyond five. However, if the user test aims to compare several interfaces with each other in a hypothesis test, more participants are needed: enough to fill each of the groups being compared (corresponding to different types of users or interfaces).
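The "five participants" argument is usually justified with a simple discovery model. The sketch below assumes the commonly cited form 1 - (1 - L)^n, where L is the probability that a single participant reveals a given problem. The default value L = 0.31 is the average reported by Nielsen and Landauer and is an assumption here; the function name is purely illustrative. With that value, five participants give roughly 84%, which is of the same order as the figure quoted above.

```python
def share_of_problems_found(n_users: int, detection_rate: float = 0.31) -> float:
    """Expected share of usability problems found by n_users participants.

    Implements the model 1 - (1 - L)^n, where L (detection_rate) is the
    probability that one participant reveals a given problem.
    The default 0.31 is an assumed average, not a property of your product.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be between 0 and 1")
    return 1.0 - (1.0 - detection_rate) ** n_users


if __name__ == "__main__":
    # Show how quickly the curve flattens as participants are added.
    for n in (1, 3, 5, 10, 15):
        print(f"{n:>2} participants: {share_of_problems_found(n):.0%} of problems")
```

The curve flattens quickly, which is why adding participants beyond five brings diminishing returns in a purely qualitative test. If the actual detection rate for a given product is lower than assumed, more participants are needed to reach the same coverage.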

Test scenario

The development of the test scenario and of the tasks to be performed by the testers is of utmost importance. One can be very directive, in which case the participant uses exactly the functions one wants to test. But does what is imposed on the participant match the future use he or she will make of the service? This has to be checked beforehand. Conversely, free exploration may lead the participant to neglect certain functions for lack of specific goals. Both cases come down to specifying the context: on what occasions, why, and for what purposes will the end user make use of the service being evaluated?

Prototyping

Prior to any user test, prototyping is of particular importance. User testing is most meaningful during the design of the product or service: it allows the product or service to be evaluated before it is complete, while modifications are still possible. These modifications can in turn be tested until the software is judged satisfactory. The challenge is to submit to the user an interface that is as close as possible to the finished product when the product is not yet finished. The difficulty therefore lies in presenting a prototype that can be tested, but that can also be easily changed if problems are detected. This is what prototyping is all about, and many tools can facilitate this work.

Protocol

Procedure

“Classically”, user testing proceeds as follows:

Participants are interviewed individually by the UX researcher, who gathers useful biographical information such as previous experience with the interface (if applicable), age, computer experience, etc. The testers are also asked to provide a brief description of that experience. It is during this stage that the consent of the participants must be collected, particularly with regard to the recording of the session (video, audio and personal information, retention time). Similarly, the confidentiality of the exchanges must be guaranteed to allow participants to express themselves freely.

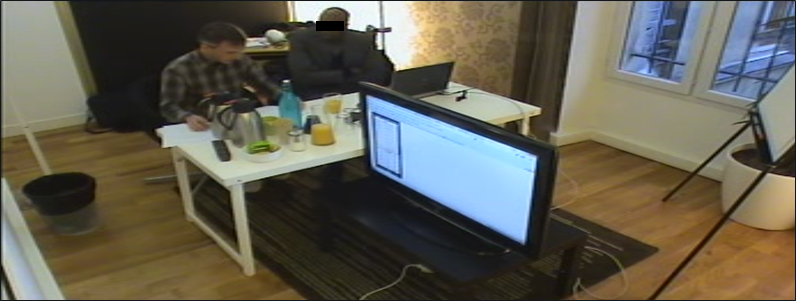

The researcher then places hiself next to the participant, slightly backwards, so as to be able to witness his or her interaction with the service being evaluated, as illustrated below :

However, new tools such as Lookback now make it possible to conduct tests remotely (tester and participants being physically distant), or even unmoderated (the participant takes the test alone by following a set of instructions defined in advance by the researcher). In the latter case, the participant will, if necessary, enter their personal information in an input interface provided for this purpose.

After this first step, the participants start the test. It is important to let the participants know that they are not the ones being evaluated: the interface is. As such, any mistake made reflects an error in the design of the service, not a failing on their part.

Regardless of how the test is conducted (face-to-face or remote, moderated or not), the most important thing is to ensure that the tester does not influence the participants:

- Do not suggest any behaviour or action to them with the service.

- Do not suggest answers to questions (for example, ask “What do you think?” rather than “Don’t you think it’s good?”).

Evaluations and measures

Perception

This type of test makes it possible to estimate, in addition to comprehension, a certain form of satisfaction with the aesthetics of the interface. This is all the more important as colours play an essential role in the construction of pictograms, icons and other navigational markers, which would lose their meaning without them. It also helps ensure internal consistency in drop-down menus and hyperlinks, so that users intuitively find what they are looking for where they look for it.

Objectivity & exhaustiveness

This method of user testing has the great advantage of objectivity, as opposed to audit methods. It allows us to identify ergonomic problems concretely and to measure usability by evaluating the performance of the participants. The test can be carried out either in a test room or in the field, with the advantages and disadvantages that each location entails (more or less control in exchange for more or less context).

This gives rise to different ways of collecting information, depending on the type of task, the problems to be detected, and the characteristics of the prototype under test:

- Some testing techniques are very directive and intrusive:

  - Tester-guided exploration,

  - Learning by co-discovery,

  - and so on.

- Others less so:

  - External vocalization (the participant says aloud everything he or she does and everything that goes through his or her head).

- Some not at all:

  - Remote observation and recording,

  - Performance measurement,

  - Delayed hindsight (the user comments on everything he or she did and thought, after interacting with the service).

Measurements

The first type of data that can be collected is a video recording of the participants' faces while they interact with the interface, which is itself recorded. This is now possible on all types of devices: desktop, laptop and smartphone. Software such as Morae or Lookback allows the user’s face to be embedded as a picture-in-picture (PIP) overlay on the screen capture of the interface. A single file thus synchronizes the facial analysis of emotions with the tasks carried out on the interface.

One may also consider recording eye movements with the famous “eye tracker”, although its usefulness relative to its cost of implementation is questionable.

In a more pragmatic way, the ISO 9241-11 standard proposes evaluating the usability of an interface through different measurements. They can be, for example:

Effectiveness: quality of the results produced by the interaction

- Successes / trial and error / failures (qualitative measure),

- Approximate time to complete a task (quantitative measure),

- Characterization of navigational errors (qualitative measure).

Efficiency: linking result to effort

- Relationship between effectiveness and satisfaction, subjective mental load or time spent.

Satisfaction: user feedback on the interface

- Analysis of facial expressions (qualitative measure),

- Questionnaire with satisfaction scales (quantitative measure).
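As a rough illustration, the three dimensions above can be summarized from a simple log of task attempts. The sketch below is only one possible operationalization, not something prescribed by ISO 9241-11: effectiveness is taken as the completion rate, efficiency as successful tasks per minute of participant time, and satisfaction as the mean of a 1 to 5 rating. The `TaskAttempt` record, its field names and the rating scale are all assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskAttempt:
    """One participant's attempt at one task (hypothetical record format)."""
    participant: str
    task: str
    completed: bool      # True if the participant reached the goal
    seconds: float       # time spent on the task
    satisfaction: int    # self-reported rating, assumed to be on a 1-5 scale


def effectiveness(attempts: list[TaskAttempt]) -> float:
    """Share of attempts that ended in success (0.0 to 1.0)."""
    if not attempts:
        raise ValueError("no attempts recorded")
    return sum(a.completed for a in attempts) / len(attempts)


def efficiency(attempts: list[TaskAttempt]) -> float:
    """Successful tasks per minute of participant time (result vs. effort)."""
    total_minutes = sum(a.seconds for a in attempts) / 60
    if total_minutes <= 0:
        raise ValueError("total time must be positive")
    return sum(a.completed for a in attempts) / total_minutes


def satisfaction(attempts: list[TaskAttempt]) -> float:
    """Mean satisfaction rating across attempts."""
    if not attempts:
        raise ValueError("no attempts recorded")
    return mean(a.satisfaction for a in attempts)


if __name__ == "__main__":
    # Made-up sample data, for illustration only.
    log = [
        TaskAttempt("P1", "find contact page", True, 60, 4),
        TaskAttempt("P2", "find contact page", True, 90, 5),
        TaskAttempt("P3", "find contact page", False, 150, 2),
        TaskAttempt("P4", "find contact page", True, 60, 3),
    ]
    print(f"effectiveness: {effectiveness(log):.0%}")
    print(f"efficiency:    {efficiency(log):.2f} successes per minute")
    print(f"satisfaction:  {satisfaction(log):.1f} / 5")
```

With so few participants these figures describe the sessions observed rather than the target population, so they are best read alongside the qualitative observations.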

Finally, after each type of task, or at the end of the user test, the participants can answer questions designed to bring out how they perceived the interaction, or simply fill in a satisfaction questionnaire. Several types of questions can be asked, covering different dimensions of the interface evaluation:

- Perceived ease of use,

- Subjective mental load,

- A function or element of interaction (What do you think about access to this type of information?),

- As well as the intent of use (Would you use this application if it were available?).

What’s next?

It is necessary to iterate, i.e. take into consideration, as much as possible, each problem or difficulty identified, even for a single user, and provide a solution in a future version of the interface. Indeed, this method is qualitative, and one cannot infer from the proportion of participants affected by a problem its incidence in the target population. Each usability problem detected must therefore be addressed.
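One way to make sure no problem is dropped between iterations is to keep a log that groups observations by problem, without filtering on how many participants were affected. The sketch below assumes observations are noted as (participant, problem) pairs; the function name and the data format are hypothetical.

```python
from collections import defaultdict


def group_observations(observations):
    """Map each problem to the sorted list of participants who encountered it.

    observations: iterable of (participant, problem) pairs.
    Every problem is kept, even if only one participant encountered it.
    """
    by_problem = defaultdict(set)
    for participant, problem in observations:
        by_problem[problem].add(participant)
    return {problem: sorted(people) for problem, people in by_problem.items()}


if __name__ == "__main__":
    # Made-up session notes, for illustration only.
    notes = [
        ("P1", "search button not recognized"),
        ("P2", "search button not recognized"),
        ("P3", "unclear label on the settings menu"),
    ]
    for problem, people in group_observations(notes).items():
        print(f"{problem}: {', '.join(people)}")
```

The participant lists serve traceability (which recording to review), not prioritization by frequency.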